Two years ago, running a 70-billion-parameter language model required a cluster of A100 GPUs and a small fortune in cloud bills. Today, hobbyists load the same class of model on a high-memory M-series MacBook or on consumer GPUs such as the RTX 4090, typically by combining aggressive quantization with partial CPU offload, since a 70B model still needs about 35 GB for its weights at 4 bits against the 4090's 24 GB. The key ingredient is quantization, and the pace of improvement has been extraordinary.

What Quantization Actually Does

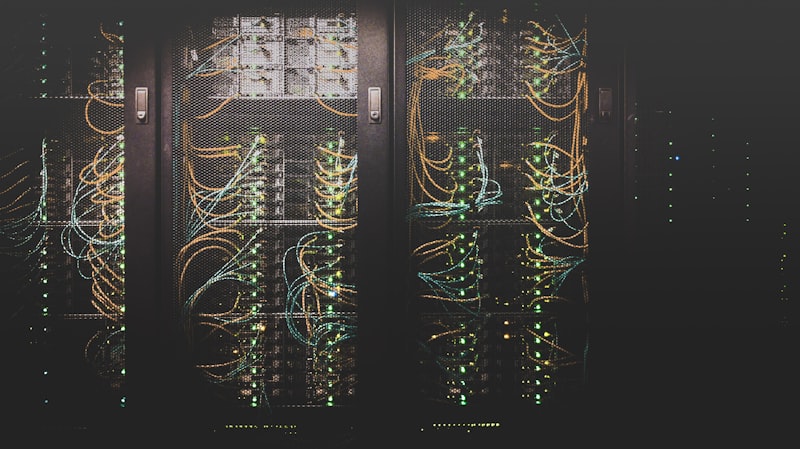

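At its core, quantization reduces the numerical precision of a model's weights. A full-precision model stores each weight as a 16-bit or 32-bit floating-point number. Quantization converts these to 8-bit, 4-bit, or even 2-bit integers, usually with a shared floating-point scale factor per small group of weights. The memory savings are dramatic: a 4-bit model occupies roughly a quarter of the memory of its 16-bit counterpart, plus a small overhead for the scale factors. Inference also tends to speed up, because text generation is usually limited by how fast weights can be read from memory, and smaller weights mean fewer bytes to read per token.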
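To make the idea concrete, here is a minimal sketch of the simplest form of weight quantization: symmetric round-to-nearest with one scale per group of weights. It is an illustration under stated assumptions, not how any particular library implements it. The function names, the group size of 32, and the use of the range -7..7 (rather than the full signed 4-bit range -8..7) are choices made for this example.

```python
# Illustrative sketch only: symmetric round-to-nearest 4-bit quantization
# with one floating-point scale per group. All names here are hypothetical.
import random

QMAX = 7  # symmetric integer range used in this sketch: -7..7


def quantize_group(weights):
    """Map a group of floats to integers in [-QMAX, QMAX] plus one scale."""
    max_abs = max(abs(w) for w in weights)
    # An all-zero group gets an arbitrary scale of 1.0 to avoid dividing by zero.
    scale = max_abs / QMAX if max_abs > 0 else 1.0
    q = [max(-QMAX, min(QMAX, round(w / scale))) for w in weights]
    return q, scale


def quantize(weights, group_size=32):
    """Split the weights into consecutive groups and quantize each one."""
    return [
        quantize_group(weights[i:i + group_size])
        for i in range(0, len(weights), group_size)
    ]


def dequantize(groups):
    """Reconstruct approximate floats from (integers, scale) pairs."""
    restored = []
    for q, scale in groups:
        restored.extend(v * scale for v in q)
    return restored


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(256)]  # synthetic weights
groups = quantize(weights)
restored = dequantize(groups)

max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(f"max round-trip error: {max_err:.6f}")
```

Each restored weight differs from the original by at most half a quantization step (half of that group's scale), which is why smaller groups, and therefore tighter scales, generally reduce error at the cost of storing more scale factors.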

The Key Players: GPTQ, AWQ, and GGUF

GPTQ was among the first post-training quantization methods to show that 4-bit large language models could preserve most of the original model's accuracy. AWQ (Activation-aware Weight Quantization) improved on this by using activation statistics to identify the weight channels that matter most and rescaling them so they lose less precision when quantized. Meanwhile, the GGUF format popularized by llama.cpp made quantized models easy to download and run on CPUs and Apple Silicon, with no discrete GPU required.

The democratization of large language models is not happening through smaller models alone — it is happening through smarter compression of the biggest ones.

Why This Matters for India's AI Community

For developers in India and other emerging markets, quantization removes the biggest barrier to experimenting with frontier AI: hardware cost. A student with a mid-range gaming laptop can now fine-tune and deploy open models that, on many tasks, rival API-only offerings. This levels the playing field and opens up local-language model development, privacy-preserving on-device AI, and edge deployment for agriculture, healthcare, and education applications.
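A quick way to judge whether a given model is within reach of a particular laptop or GPU is to estimate how much memory its weights need at each precision. The sketch below is a back-of-the-envelope calculation under simple assumptions: it counts weights only (no activations or KV cache), and the helper name, the group size of 32, and the 16-bit scale per group are illustrative choices rather than the layout of any specific format.

```python
# Rough weight-memory estimate. Ignores activations, KV cache, and runtime
# overhead, so real usage will be higher. The helper name is hypothetical.

def weight_memory_gib(n_params, bits_per_weight, group_size=None, scale_bits=16):
    """Approximate weight storage in GiB.

    If group_size is given, one scale of scale_bits is added per group,
    which raises the effective number of bits per weight.
    """
    effective_bits = bits_per_weight
    if group_size:
        effective_bits += scale_bits / group_size
    return n_params * effective_bits / 8 / 2**30


for name, n_params in [("7B", 7e9), ("70B", 70e9)]:
    fp16 = weight_memory_gib(n_params, 16)
    int4 = weight_memory_gib(n_params, 4, group_size=32)
    print(f"{name}: 16-bit ~{fp16:.1f} GiB, 4-bit ~{int4:.1f} GiB")
```

Under these assumptions, a 7B model at 4 bits needs only a few GiB for its weights, which is what brings it within reach of a gaming laptop, while a 70B model at 4 bits still needs several tens of GiB.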

Key takeaways from the quantization revolution:

- 4-bit quantized models are commonly reported to retain roughly 95-99% of the original model's quality on most tasks

- The GGUF format, together with llama.cpp, enables CPU-only inference, so a GPU is no longer a requirement

- Fine-tuning quantized models with QLoRA slashes training costs by 10-50x

- Edge deployment on mobile and IoT devices is becoming practical for the first time

- Open-source tooling (llama.cpp, vLLM, ExLlamaV2) is mature and production-ready