Meta's release of Llama 4 marks a pivotal moment for the open-weight AI movement. For the first time, Meta has adopted a mixture-of-experts (MoE) architecture in its flagship open model, enabling it to deliver performance competitive with proprietary alternatives while activating only a fraction of its total parameters during inference.

Mixture-of-Experts: Why It Matters

A traditional dense model activates every parameter for every token. An MoE model instead routes each token to a small subset of specialized expert networks. Llama 4 Maverick, for example, has roughly 400 billion total parameters spread across 128 experts but activates only about 17 billion of them per token. The result is the knowledge capacity of a very large model at a per-token compute cost closer to that of a much smaller one, although the full set of weights must still be held in memory to serve it.
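To make the routing idea concrete, here is a minimal sketch of top-k expert routing in plain Python. It is a generic illustration, not Meta's implementation: the names `route_token` and `moe_layer`, the toy dimensions, and the "experts" (simple scaling functions) are all assumptions made for the example, and a real model's router differs in its details.

```python
import math
import random


def softmax(scores):
    """Convert raw router scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(token, router_weights, top_k=1):
    """Return [(expert_index, gate_weight), ...] for the top_k experts.

    router_weights holds one weight vector per expert; an expert's score
    is the dot product of its vector with the token vector.
    """
    scores = [sum(w * x for w, x in zip(row, token)) for row in router_weights]
    probs = softmax(scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)  # renormalize over the chosen experts
    return [(i, probs[i] / norm) for i in chosen]


def moe_layer(token, router_weights, experts, top_k=1):
    """Run only the selected experts and mix their outputs by gate weight."""
    output = [0.0] * len(token)
    for index, gate in route_token(token, router_weights, top_k):
        expert_out = experts[index](token)  # unselected experts never run
        output = [o + gate * e for o, e in zip(output, expert_out)]
    return output


# Toy setup (hypothetical sizes): 8 experts, 4-dimensional token vectors.
rng = random.Random(0)
DIM, NUM_EXPERTS = 4, 8
router_weights = [[rng.uniform(-1, 1) for _ in range(DIM)] for _ in range(NUM_EXPERTS)]
# Each stand-in "expert" just scales its input by a different factor.
experts = [lambda x, s=i + 1: [s * v for v in x] for i in range(NUM_EXPERTS)]

token = [0.5, -1.0, 0.25, 2.0]
selected = route_token(token, router_weights, top_k=2)
result = moe_layer(token, router_weights, experts, top_k=2)
print("experts used:", [i for i, _ in selected], "of", NUM_EXPERTS)
```

Only the experts returned by `route_token` are ever called, which is where the compute saving comes from: the other experts' parameters exist but do no work for that token.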

The Open-Weight Advantage

Meta continues to release model weights under the Llama 4 Community License, which permits commercial use subject to Meta's terms. This is strategically significant. By commoditizing the model layer, Meta drives adoption of its ecosystem while encouraging innovation at the application layer. For startups and researchers who cannot afford API-only dependencies, open weights mean sovereignty over their AI stack: they can fine-tune, deploy on-premises, and audit the model's behavior.

Open weights are not charity. They are a strategic bet that the value in AI accrues to those who control the platform and the data, not the model weights themselves.

Impact on the Indian AI Ecosystem

Why Llama 4 matters for developers in India:

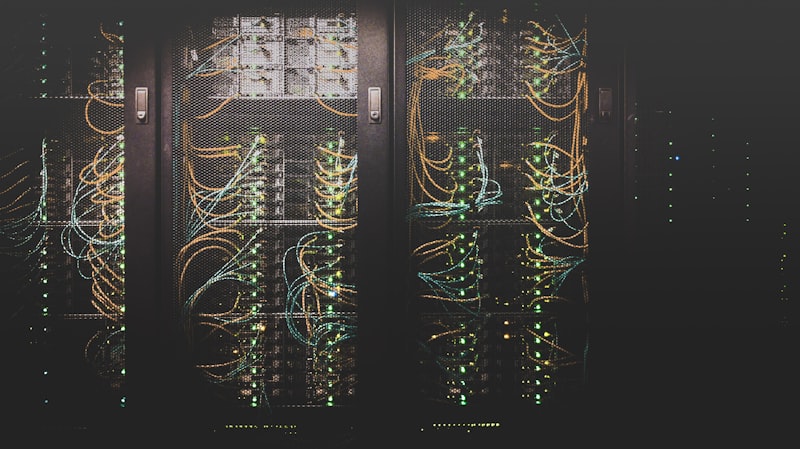

- MoE architecture reduces the compute needed per token when serving, which can lower cloud costs

- Open weights enable fine-tuning on Indian languages without API limitations

- On-premises deployment addresses data sovereignty concerns for regulated industries

- Community-driven quantized versions appear within days of release

- Compatible with growing Indian AI infrastructure providers and GPU clouds
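The serving-cost and quantization points above come down to simple arithmetic, sketched below. The parameter counts are assumptions taken from the approximate figures Meta has published for Llama 4 Maverick (about 400 billion total, about 17 billion active per token), and the helper `weight_memory_gb` is a hypothetical name. The estimate covers the weights only and ignores the KV cache, activations, and runtime overhead, so real deployments need more memory than this.

```python
def weight_memory_gb(num_params, bits_per_param):
    """Approximate memory for the weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


TOTAL_PARAMS = 400e9   # assumption: approximate total parameter count
ACTIVE_PARAMS = 17e9   # assumption: approximate parameters active per token

# Every expert must be resident in memory, so memory scales with the total.
for bits in (16, 8, 4):
    size = weight_memory_gb(TOTAL_PARAMS, bits)
    print(f"{bits:>2}-bit weights: ~{size:,.0f} GB")

# Per-token compute scales with the active parameters instead.
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS
print(f"active per token: ~{active_fraction:.1%} of all parameters")
```

Under these assumptions, quantizing from 16-bit to 4-bit cuts the weight footprint to a quarter, which is why community quantizations matter so much for teams with limited GPU budgets.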

The Llama 4 release reinforces a trend: the gap between open and proprietary models is shrinking. For builders focused on practical applications rather than benchmark bragging rights, open models now offer a compelling combination of capability, cost, and control.